
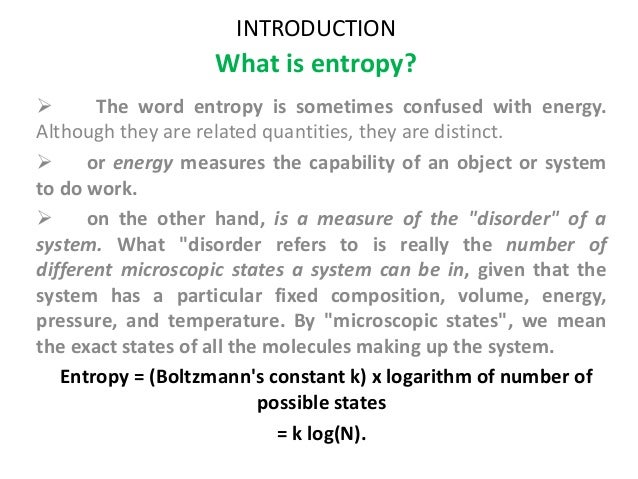

11/21/2023

Entropy

In information theory, the entropy of a random variable is the average level of 'information', 'surprise', or 'uncertainty' inherent in the variable's possible outcomes.

How to understand Shannon's information entropy

Entropy measures the degree of our lack of information about a system. Imagine a 2x2 Rubik's cube, solved so that each face contains just one colour. Take it into your hands, shut your eyes, and twist the sides around randomly a few times.

In statistical physics, entropy carries Boltzmann's constant, $S = -k_B \sum_i p_i \ln p_i$. Unfortunately, in information theory the symbol for entropy is $H$ and the constant $k_B$ is absent; we have changed their notation to avoid confusion. The expression

$$H = -\sum_i p_i \log p_i$$

is called Shannon entropy or information entropy.

A basic neural network layer, with a forward and a backward pass, can be written as follows:

    import numpy as np

    class Layer:
        '''Basic neural network layer with forward and backward pass functions.'''

        def __init__(self, **kwargs):
            '''Used to store layer variables. Empty by default.'''
            pass

        def forward(self, value):
            '''Compute the forward pass on the computational graph.
            The identity function is used by default.'''
            return value

        def backward(self, value, grad_output):
            '''Compute the derivative of the loss function with respect to a given
            input, moving from right to left through the computational graph
            (backprop). Takes the gradient already computed for the following
            layer (grad_output) as an argument, so the computation is efficient.

            Given k layers, the chain rule for the derivative of the whole graph is:
                d loss / d value = d loss / d layer_k * d layer_k / d layer_(k-1) * ... * d layer_0 / d value
            At each layer only a single term of this product is computed, taking
            the previous computations as an input.

            By default the identity function is used for activation in the forward
            pass, so the Jacobian is simply an n x n identity matrix. Nevertheless,
            the proper matrix math is shown here for completeness.'''
            n_units = value.shape[1]
            d_layer_d_input = np.eye(n_units)
            return np.dot(grad_output, d_layer_d_input)

A dense (fully connected) layer overrides both passes and learns its weights with stochastic gradient descent (SGD):

    class Dense(Layer):
        def __init__(self, n_inputs, n_outputs, learning_rate=0.001):
            '''A function approximator that learns weights W in f(x) = XW + b such
            that f(x) approximates the target values y as closely as possible,
            according to some criterion.

            Parameters:
            - n_inputs: number of input features per sample. The batch size (the
              number of samples processed each iteration) is the number of rows of X.
            - n_outputs: number of output units.
            - learning_rate: step size for updating the weights W. Choose a
              sufficiently small value so that training converges.'''
            super().__init__()
            self.n_inputs = n_inputs
            self.n_outputs = n_outputs
            self.learning_rate = learning_rate
            self.grad_input = None
            self.initialize_weights()

        def initialize_weights(self):
            # Assumed initialization: small random normal weights, zero bias.
            self.weights = np.random.normal(scale=0.01, size=(self.n_inputs, self.n_outputs))
            self.bias = np.zeros((1, self.n_outputs))

        def forward(self, value):
            '''f(x) = XW + b'''
            return np.dot(value, self.weights) + self.bias

        def backward(self, value, grad_output):
            '''Compute this layer's contribution to the gradient of the overall
            loss, d loss / d value = grad_output W^T, then update W and b.'''
            # The input gradient must use W before the update below.
            self.grad_input = np.dot(grad_output, self.weights.T)
            grad_weights = np.dot(value.T, grad_output)
            grad_bias = np.sum(grad_output, axis=0, keepdims=True)
            # Update weights and bias via SGD
            self.weights = self.weights - self.learning_rate * grad_weights
            self.bias = self.bias - self.learning_rate * grad_bias
            return self.grad_input

        def get_weights(self):
            return self.weights

        def get_gradient(self):
            return self.grad_input

To transform the model output into a probability of class membership given $i$ potential classes, a softmax function is used.
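To make the entropy definition concrete, here is a minimal sketch that computes $H = -\sum_i p_i \log_2 p_i$ in bits. The function name `shannon_entropy` is hypothetical and base 2 is an assumed choice of units. A uniform distribution over $N$ outcomes gives $\log_2 N$, the largest value possible for $N$ outcomes, which matches the intuition that a randomly twisted cube, whose state could be any of many configurations, carries more uncertainty than a solved one.

```python
import math

def shannon_entropy(probs):
    """Shannon entropy H = -sum_i p_i log2 p_i, in bits, of a discrete distribution."""
    if any(p < 0 for p in probs) or not math.isclose(sum(probs), 1.0):
        raise ValueError("probs must be non-negative and sum to 1")
    # Outcomes with p_i = 0 contribute nothing, since p log p -> 0 as p -> 0.
    return -sum(p * math.log2(p) for p in probs if p > 0)

print(shannon_entropy([0.5, 0.5]))    # fair coin: 1.0 bit
print(shannon_entropy([0.125] * 8))   # uniform over 8 outcomes: log2(8) = 3.0 bits
print(shannon_entropy([0.9, 0.1]))    # a biased coin is less uncertain than a fair one
```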
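The chain rule described in `Layer.backward` can be checked numerically. The sketch below assumes a toy stack of hypothetical layers (linear, identity, tanh, linear) acting on a single sample; it multiplies one local Jacobian per layer from right to left and compares the result with a central finite-difference estimate. The identity layer's Jacobian is `np.eye(4)`, so it passes the gradient through unchanged.

```python
import numpy as np

rng = np.random.default_rng(3)
x = rng.normal(size=3)        # one input sample, treated as a row vector
W1 = rng.normal(size=(3, 4))  # hypothetical weights of the first linear layer
W2 = rng.normal(size=(4, 2))  # hypothetical weights of the second linear layer

def forward(x):
    h1 = x @ W1               # linear layer
    h2 = h1                   # identity layer (the default Layer behaviour)
    h3 = np.tanh(h2)          # tanh activation
    h4 = h3 @ W2              # linear layer
    return h1, h2, h3, h4

def loss_fn(x):
    return 0.5 * np.sum(forward(x)[3] ** 2)

h1, h2, h3, h4 = forward(x)
# Local Jacobians J[i, j] = d out_i / d in_j, one per layer.
jacobians = [
    W1.T,                              # d h1 / d x
    np.eye(4),                         # d h2 / d h1: identity layer
    np.diag(1.0 - np.tanh(h2) ** 2),   # d h3 / d h2
    W2.T,                              # d h4 / d h3
]

grad = h4                       # d loss / d h4 for the squared loss
for J in reversed(jacobians):   # right to left: one chain-rule factor per layer
    grad = grad @ J

# Central finite-difference estimate of d loss / d x
eps = 1e-6
numeric = np.array([(loss_fn(x + eps * e) - loss_fn(x - eps * e)) / (2 * eps)
                    for e in np.eye(3)])
print(np.allclose(grad, numeric, atol=1e-6))  # True
```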
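The gradients used in `Dense.backward` follow from f(x) = XW + b: d loss/dX = grad_output W^T, d loss/dW = X^T grad_output, and d loss/db is grad_output summed over the batch. This sketch checks those three formulas against central finite differences. `dense_forward`, `dense_backward` and `numerical_grad` are hypothetical stand-alone helpers, not the classes above, and the squared-error loss is an assumption chosen only because its output gradient is easy to write down.

```python
import numpy as np

def dense_forward(X, W, b):
    return X @ W + b

def dense_backward(X, W, grad_output):
    """Gradients of a scalar loss w.r.t. X, W and b, given d loss / d output."""
    grad_input = grad_output @ W.T                    # shape (batch, n_inputs)
    grad_W = X.T @ grad_output                        # shape (n_inputs, n_outputs)
    grad_b = grad_output.sum(axis=0, keepdims=True)   # shape (1, n_outputs)
    return grad_input, grad_W, grad_b

def numerical_grad(f, A, eps=1e-6):
    """Central finite-difference gradient of f() w.r.t. array A (A is restored afterwards)."""
    grad = np.zeros_like(A)
    for idx in np.ndindex(A.shape):
        old = A[idx]
        A[idx] = old + eps
        plus = f()
        A[idx] = old - eps
        minus = f()
        A[idx] = old
        grad[idx] = (plus - minus) / (2 * eps)
    return grad

rng = np.random.default_rng(0)
X = rng.normal(size=(4, 3))        # batch of 4 samples, 3 input features
W = rng.normal(size=(3, 2))        # n_inputs=3, n_outputs=2
b = rng.normal(size=(1, 2))
target = rng.normal(size=(4, 2))

def loss():
    return 0.5 * np.sum((dense_forward(X, W, b) - target) ** 2)

grad_output = dense_forward(X, W, b) - target   # d loss / d output for this loss
grad_input, grad_W, grad_b = dense_backward(X, W, grad_output)

print(np.allclose(grad_input, numerical_grad(loss, X), atol=1e-5))  # True
print(np.allclose(grad_W, numerical_grad(loss, W), atol=1e-5))      # True
print(np.allclose(grad_b, numerical_grad(loss, b), atol=1e-5))      # True
```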
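The update in `Dense.backward` subtracts `learning_rate` times each gradient. A minimal sketch, assuming synthetic noise-free data generated from hypothetical `W_true` and `b_true` and a mean-squared-error loss, shows that rule recovering the parameters. It uses the full batch at every step (plain gradient descent); SGD proper would draw a random mini-batch each iteration.

```python
import numpy as np

rng = np.random.default_rng(42)
n_samples, n_inputs, n_outputs = 64, 3, 2
X = rng.normal(size=(n_samples, n_inputs))
W_true = rng.normal(size=(n_inputs, n_outputs))   # hypothetical target weights
b_true = rng.normal(size=(1, n_outputs))          # hypothetical target bias
y = X @ W_true + b_true

W = np.zeros((n_inputs, n_outputs))
b = np.zeros((1, n_outputs))
learning_rate = 0.1

def mse(pred, target):
    return np.mean((pred - target) ** 2)

initial_loss = mse(X @ W + b, y)
for _ in range(1000):
    pred = X @ W + b
    grad_output = 2.0 * (pred - y) / pred.size     # d MSE / d pred
    W -= learning_rate * (X.T @ grad_output)       # same update rule as Dense.backward
    b -= learning_rate * grad_output.sum(axis=0, keepdims=True)
final_loss = mse(X @ W + b, y)
print(initial_loss, final_loss)  # final_loss is far smaller than initial_loss
```

A learning rate that is too large makes the same loop diverge, which is why the post recommends a sufficiently small value.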
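A numerically stable softmax can be sketched as follows; the name `softmax` and the one-row-per-sample layout are assumptions. Subtracting each row's maximum before exponentiating leaves the probabilities unchanged but avoids overflow for large model outputs.

```python
import numpy as np

def softmax(logits):
    """Map raw model outputs (one row per sample) to class probabilities."""
    # Subtracting the row max does not change the result but prevents overflow in exp.
    shifted = logits - np.max(logits, axis=-1, keepdims=True)
    exp = np.exp(shifted)
    return exp / np.sum(exp, axis=-1, keepdims=True)

logits = np.array([[2.0, 1.0, 0.1],
                   [1000.0, 1000.0, 1000.0]])   # naive exp(1000) would overflow
probs = softmax(logits)
print(probs)
print(probs.sum(axis=1))  # each row sums to 1
```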
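Softmax outputs are commonly trained with the cross-entropy loss, which ties the classifier back to entropy. This sketch uses hypothetical helpers `log_softmax`, `cross_entropy` and `cross_entropy_grad`, assumes integer class labels, and relies on the standard result that the gradient of the mean cross-entropy with respect to the logits is $(p - y)/n$ for one-hot targets $y$; a finite-difference check confirms it. With all-zero logits over 4 classes the loss equals $\ln 4$, the entropy in nats of a uniform distribution over 4 outcomes.

```python
import numpy as np

def log_softmax(logits):
    """Row-wise log of the softmax, computed stably."""
    shifted = logits - np.max(logits, axis=-1, keepdims=True)
    return shifted - np.log(np.sum(np.exp(shifted), axis=-1, keepdims=True))

def cross_entropy(logits, labels):
    """Mean of -log p_label over the batch (labels are integer class indices)."""
    n = logits.shape[0]
    return -np.mean(log_softmax(logits)[np.arange(n), labels])

def cross_entropy_grad(logits, labels):
    """Gradient of cross_entropy w.r.t. the logits: (p - y) / n, with y one-hot."""
    n = logits.shape[0]
    grad = np.exp(log_softmax(logits))   # softmax probabilities p
    grad[np.arange(n), labels] -= 1.0    # subtract the one-hot targets y
    return grad / n

rng = np.random.default_rng(1)
logits = rng.normal(size=(5, 4))
labels = np.array([0, 3, 1, 2, 3])

# Central finite differences, one logit at a time
eps = 1e-6
numeric = np.zeros_like(logits)
for idx in np.ndindex(logits.shape):
    up, down = logits.copy(), logits.copy()
    up[idx] += eps
    down[idx] -= eps
    numeric[idx] = (cross_entropy(up, labels) - cross_entropy(down, labels)) / (2 * eps)

print(np.allclose(numeric, cross_entropy_grad(logits, labels), atol=1e-6))  # True
print(cross_entropy(np.zeros((2, 4)), np.array([0, 1])), np.log(4))       # equal values
```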
